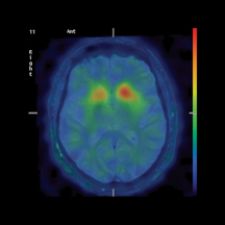

PET-MRI Fusion, Fronto-temporal Dementia.

Image fusion of molecular and anatomic data has proven extremely useful for diagnosis and treatment in radiology, neurology, oncology and cardiology, enabling localization of tumors and lesions, planning for radiotherapy, biopsy and surgery, and in new applications in CT angiography. The use of fusion imaging in several clinical departments has prompted the proliferation of hybrid modalities, such as PET/CT, MR/CT, SPECT/CT and CT/CT, and is fostering a new clinical environment where physicians across several specialties are collaborating for more accurate interpretation and reporting.

Despite tremendous advances in this growing technology, fusion imaging has a few hurdles to jump before becoming a more prevalent clinical tool. Image registration must still overcome inaccuracies produced by patient positioning and nonrigid deformation. Difficulties with 2-D registration are blocking potential applications in cardiology, while optimizing the use of 3-D in fusion imaging still involves a more sophisticated approach. Plus, implementing connectivity across departments is requisite for multimodality workstations to leverage the advantages of cross-specialty reporting.

Cloudy Visualization Techniques

“There is common consensus that image fusion is becoming increasingly popular because you have a great snapshot of anatomy and various other imaging devices can give you a wonderful snapshot of physiology,” noted Kevin Clark, manager of Marketing and Product Communications, Cedara Software.

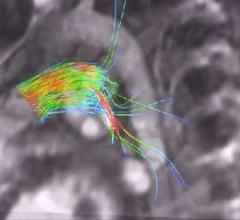

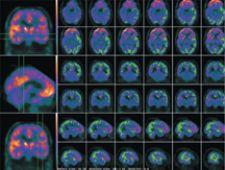

In the last few years, fusion imaging has enabled tremendous clinical milestones, such as using PET images on patients with Alzheimer’s disease to highlight any area of the brain with high concentrations of plaques. Another technique involves ictal-interictal image subtraction overlaid on MRI to identify abnormal blood flow in the brain due to epilepsy and to plan epilepsy surgery. For biopsy or surgery, fusing images of PET or SPECT on MRI or CT helps localize disease and anatomically guide the surgeon, and multitracer image fusion facilitates the treatment of patients with Hodgkin’s lymphoma.

While techniques for automated rigid registration and retrospective registration enable linear or nonlinear alignment, there are still technical challenges with nonrigid, or deformable, registration, a method that applies deformation to correct nonlinear changes and organ shifts.
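The difference between the two approaches can be shown with a minimal Python sketch. It is an illustration only, not any vendor's algorithm: the function names and the example displacement field are hypothetical. A rigid transform applies one rotation and one translation to every point, so distances between landmarks are preserved; a deformable transform moves each point by its own displacement, so distances can change.

```python
import math


def rigid_transform(points, angle_deg, tx, ty):
    """Rotate 2-D points about the origin by angle_deg, then translate by (tx, ty)."""
    angle = math.radians(angle_deg)
    c, s = math.cos(angle), math.sin(angle)
    return [(c * x - s * y + tx, s * x + c * y + ty) for x, y in points]


def deformable_transform(points, displacement):
    """Move each point by a position-dependent offset.

    `displacement` is a hypothetical callable (x, y) -> (dx, dy) standing in
    for the deformation field a nonrigid registration would estimate.
    """
    moved = []
    for x, y in points:
        dx, dy = displacement(x, y)
        moved.append((x + dx, y + dy))
    return moved


def distance(p, q):
    """Euclidean distance between two 2-D points."""
    return math.hypot(p[0] - q[0], p[1] - q[1])


landmarks = [(0.0, 0.0), (3.0, 4.0)]

# Rigid: the whole point set turns and shifts together.
rigid = rigid_transform(landmarks, angle_deg=30.0, tx=10.0, ty=-2.0)

# Deformable: an assumed stretch along x that grows with distance from the origin.
stretched = deformable_transform(landmarks, lambda x, y: (0.1 * x, 0.0))
```

Here `distance(rigid[0], rigid[1])` stays equal to the original landmark spacing, while `distance(stretched[0], stretched[1])` does not, which is why organ shifts and breathing motion cannot be corrected by a rigid alignment alone.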

The Labors of Manual Registration

Whereas radiologists see diagnostics as a snapshot in time and do not regularly use deformable registration, oncologists, especially in treatment planning, need to be very exact about the location of tumors and require precise deformable registration tools.

Although image fusion tools have completely automatic rigid registration, much of the work in deformable registration remains in the hands of the physician, who still must zoom, pan and orient images manually. Multimodality imaging of the head and neck is particularly difficult where nonlinear mismatches often occur due to different arm or head positions.

“Multimodality image fusion in the brain is relatively easy, because the organ is relatively rigid. Automatic image registration and fusion of SPECT, PET and MRI is usually very rapid and effective,” explained S. Ted Treves, M.D., chief, Division of Nuclear Medicine, vice chairman for Information Systems, department of Radiology, Children’s Hospital, professor of Radiology, Harvard Medical School. “Image fusion in the body is not as easy as in the brain as the position of the body may not be the same during the acquisition of each modality. Also, the organs within the body are nonrigid (respiratory motion, cardiac motion, etc.), making exact image registration and fusion more difficult. These limitations can be sometimes overcome by using morphing methods or manual overrides.”

Automation is an enormous obstacle, according to Piotr J. Slomka, Ph.D., Cedars-Sinai Medical Center, Los Angeles, CA, and one of the early developers of image fusion software. “The lack of commercially available, easy-to-use, fully automatic software currently prohibits the widespread acceptance of image registration,” emphasized Slomka.

2-D Difficulties

Another area of difficulty in visualization is 2-D X-ray projection data for cardiology applications. Perfusion defects that are defined by SPECT or PET could be matched with the location of stenosis obtained by coronary CT angiography, but because of the limitations of registering 2-D X-ray projection data with perfusion data, this is not used clinically. Slomka points out that fully tomographic 3-D CT angiography (CTA) techniques could facilitate practical implementations when fused with an 18F-FDG PET scan. This could be used to compare perfusion and viability defects with the extent of stenosis.

Optimizing 3-D for Fusion

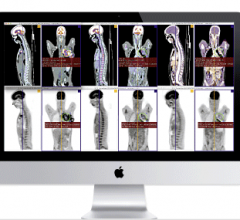

While 3-D display and image fusion are facilitating diagnosis and treatment, there is a learning curve for physicians to optimize image fusion. This requires combining complex 3-D display capabilities such as maximum intensity projection (MIP) movie data with fusion software.

“Maximum intensity projection is a technique for 3-D display. You can do MIP and fusion at the same time, but in my opinion it is icing on the cake. The fusion is used on axial display or multiplanar reformatting, but PET alone is enough to look at lesions,” indicated Slomka.

“However, there are hybrid displays where you have a MIP image and you could point at that lesion in the rotating movie, and that lesion would be then linked to a transaxial display of the slices that show a fused image of both. Now, how to display it is the issue. You can display a rotating PET and that links you to a particular slice and then you put your cursor on the slice. So I think you need these combined hybrid displays to actually make good use of fusion – a more complex display where you merge the 3-D display, such as MIP, with more traditional slices.”
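The linked display Slomka describes can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not any product's implementation: the volume is a small nested list indexed `[slice][row][column]`, the projection runs along the slice axis only, and both function names are hypothetical. The first function builds the MIP; the second takes a pixel picked in the MIP and returns the slice that holds its brightest voxel, which is the slice a viewer would then show as a fused image.

```python
def max_intensity_projection(volume):
    """Project a volume indexed [slice][row][column] along the slice axis,
    keeping the brightest voxel on each ray."""
    return [
        [max(ray) for ray in zip(*rows_at_same_y)]
        for rows_at_same_y in zip(*volume)
    ]


def slice_of_brightest(volume, row, column):
    """Return the slice index whose voxel at (row, column) is brightest,
    i.e. the slice to jump to when that MIP pixel is clicked."""
    ray = [image[row][column] for image in volume]
    return ray.index(max(ray))


# Toy PET volume: 2 slices of 2 x 2 voxels (made-up uptake values).
pet_volume = [
    [[0, 1],
     [2, 0]],
    [[5, 0],
     [1, 3]],
]

mip = max_intensity_projection(pet_volume)
linked_slice = slice_of_brightest(pet_volume, row=0, column=0)
```

In this toy volume the hot spot at row 0, column 0 sits in the second slice, so pointing at it in the MIP leads to slice index 1. A rotating MIP movie repeats the same projection from many viewing angles.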

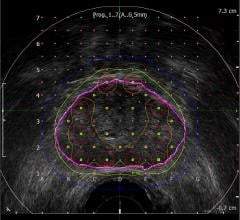

fDM Maps Out Treatment Response

MRI exams are becoming increasingly important in gauging the effectiveness of treatment in tumors. In conjunction with the MRI exams, physicians are using functional diffusion mapping (fDM), a technology that presents the potential to assist medical professionals to evaluate the impact of anti-cancer drugs and radiation therapy on tumors at an unprecedented rate. Images are coregistered to pretreatment scans and fDM calculates and displays changes in tumor diffusion values for correlation with clinical response. Diffusion MRI is essentially a biomarker for early prediction of treatment response in cancer patients.
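The voxel-by-voxel comparison behind such a map can be illustrated with a short Python sketch. It is a simplified illustration, not the published fDM method or Cedara's software: it assumes the two scans are already coregistered so that each list position is the same tumor voxel, and the diffusion values and the change threshold are made-up numbers chosen for the example.

```python
def functional_diffusion_map(pre, post, threshold):
    """Label each tumor voxel by how its diffusion value changed.

    `pre` and `post` are equal-length lists of diffusion values for the same
    voxels before and after treatment; `threshold` is an assumed cutoff
    below which a change is treated as noise.
    """
    labels = []
    for before, after in zip(pre, post):
        change = after - before
        if change > threshold:
            labels.append("increased")
        elif change < -threshold:
            labels.append("decreased")
        else:
            labels.append("unchanged")
    return labels


def fraction(labels, name):
    """Share of voxels carrying a given label."""
    return labels.count(name) / len(labels)


# Illustrative diffusion values for four tumor voxels (arbitrary units).
pre_treatment = [1.0, 1.0, 1.0, 1.0]
post_treatment = [1.8, 1.1, 0.2, 1.0]

labels = functional_diffusion_map(pre_treatment, post_treatment, threshold=0.5)
increased_share = fraction(labels, "increased")
```

The labels can be color-coded and overlaid on the anatomy, while the share of voxels in each class gives a single number to correlate with clinical response.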

In support of this technology, Cedara has a works-in-progress software solution, FUSION Response, for early detection of treatment response in brain cancer that capitalizes on fDM to assess tumor response from cellular mechanisms. Based on a new technique for registering and analyzing differences between MR diffusion-weighted images over time, co-developed by Brian Ross, M.D., professor of Radiology and Biological Chemistry at the University of Michigan, Cedara I-Response provides quantitative information for analysis of cancer treatment success. Ross developed fDM to track changes in tumors sooner than the traditional two-to-three-month waiting period.

“This technology is really taking visualization to the next level,” said Lorelle Lapstra, chief applications architect at Cedara Software. “Using registration to derive quantitative information from images represents a powerful direction for medical imaging. This is a particularly dynamic way to approach image analysis and is a powerful method to precisely quantify changes to anatomy or physiology over time. Through I-Response, this technology could be used to evaluate the impact of cancer treatment, such as radiation therapy over time.”

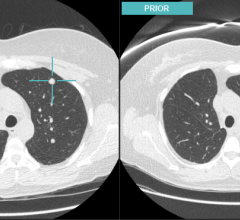

Slomka concurred that software fusion for fusing priors and currents will be important, adding, “it is currently underutilized.”

Seamless Workflow

A common challenge experienced by practitioners working in clinical environments is time wasted from moving between different applications and workstations to perform routine image analysis of different diagnostic data. To bridge the gap between workstations, vendors are introducing image fusion software that is fully integrated into PACS. GE’s Centricity PACS AW Suite enables 2-D and 3-D visualization and volume viewing for diagnosis, and Vital Images’ Vitrea 3-D image software is also integrated with PACS to allow radiologists to seamlessly move from reviewing images to performing MPRs, 3-D and 4-D analysis.

New visualization tools are adding intelligence and are becoming more clinically focused. One such application is a new middleware solution by Cedara Software that allows clinical plug-ins to be tightly integrated into PACS workstations. Aptly named the Cedara Clinical Control Center (C4), the solution can be configured to make image associations based on the user, the modality or study type. In turn, this allows different viewing applications to be launched from a single patient worklist. “Conflicts often arise when different clinical applications are installed on a single workstation. C4 has been carefully designed to minimize these problems and allow system resources to be shared amongst different programs,” said Clark.

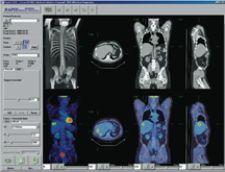

C4’s PET/CT plug-in displays images and information in a PACS-like reading context across dual monitors. “Radiologists reporting PET/CT cases are often looking for information and functionality beyond the capabilities of a standard PACS workstation. Capabilities such as image registration, image fusion, hot spot analysis and SUV are particular to PET/CT imaging. With C4 however, this functionality can be incorporated into a standard PACS workstation,” explained Clark. “In this example, the workflow value of C4 is fairly clear – a radiologist does not need to move from a PACS reporting workstation to a separate PET/CT workstation. Comprehensive diagnostic support can be provided on any PACS workstation throughout the healthcare enterprise. One can similarly see how this technology benefits a radiologist working in areas that require five or six different clinical applications to be used regularly.”

In anticipation of all specialty imaging workstations requiring PACS, HIS, 3-D tools, remote access and interoperability with other departments, physicians and hospitals, HERMES has developed RAPID CD, an application for the display and analysis of PET, NM, CT, MRI and ultrasound exams. Designed for users who do not have direct access to an image server, it features image fusion capability, shows three orthogonal views simultaneously across modalities, has image reorientation, MIP movie data, splash display, volume of interest (VOI) generation and standardized uptake value (SUV) calculation, and can be used on a PC.
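For readers unfamiliar with the SUV figure mentioned above, the commonly used body-weight form divides the tracer concentration measured in tissue by the injected dose per gram of body weight. The Python sketch below shows only that arithmetic; it is not the HERMES or Cedara implementation, the function name is hypothetical, and it assumes the dose has already been decay-corrected to scan time and that tissue density is about 1 g/mL, so the result is treated as unitless.

```python
def suv_body_weight(tissue_kbq_per_ml, injected_dose_mbq, body_weight_kg):
    """Body-weight-normalized SUV.

    Assumes the injected dose is already decay-corrected to scan time and
    that 1 mL of tissue weighs about 1 g.
    """
    if injected_dose_mbq <= 0 or body_weight_kg <= 0:
        raise ValueError("dose and body weight must be positive")
    dose_kbq = injected_dose_mbq * 1000.0   # MBq -> kBq
    weight_g = body_weight_kg * 1000.0      # kg -> g
    return tissue_kbq_per_ml / (dose_kbq / weight_g)


# Example with made-up numbers: 5 kBq/mL in the region of interest,
# 370 MBq injected, 74 kg patient.
example_suv = suv_body_weight(5.0, 370.0, 74.0)
```

With these numbers the dose per gram is 5 kBq/g, so the region's SUV is 1.0, meaning uptake equal to what an even distribution of tracer through the body would give. Hot spot analysis looks for regions well above that level.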

“The solution is very intuitive and enables us to import several 3-D image sets on the HERMES workstation,” indicated Treves. “The ability of the system to communicate with the PACS helps with the efficiency of the image fusion process. So one can say for this patient, I would like to import the CT and the SPECT, or to import the MRI and the PET. The system allows you to bring these image sets into the HERMES workstation very easily and then proceed with the image fusion. Results become available within a clinically useful time. Image fusion now takes a few seconds. In the past, it took up to an hour or two hours.”

Cross-Specialty Connecting

As radiology, neurology, oncology, nuclear medicine and cardiology tasks overlap, “connectivity, compatibility and cooperation between various clinical departments are essential for the successful application of software-based image fusion in a hospital setting,” according to Slomka.

“The easy availability of multimodality image fusion is promoting and facilitating collaboration and improved communications between nuclear medicine specialists and radiologists,” said Treves. “Overall, in appropriate instances, the results are better as correlation of functional and anatomic findings is more straightforward than in the past. From the referring physician point-of-view, diagnostic reports that address anatomic and functional features can provide more comprehensive information than would be available from separate reports.”

Incompatibility of various DICOM standards and the lack of efficient multimodality PACS are what Slomka calls “trivial” reasons why fusion software is not more widely used in clinical practice.

“The whole radiology to oncology information transfer is going to take us into new areas,” said Lapstra. “The tools will become available to different departments and the tools will become clinically focused. We are looking at very focused and driven workflow.”

Clinical Applications on the Rise

Experts anticipate that many different clinical applications will arise from the capability to do registration between any type of modality.

“We are investing a lot in segmentation because the image stacks are getting larger and larger. Before you had 200 to 300 images. Now with CT slices you have thousands of images and to ask someone to review the whole case becomes more daunting. That is why there are more CAD algorithms to assist with the diagnosis,” noted Lapstra. “As a result of the whole radiology to oncology information transfer, tools will become available to different departments and the tools will become clinically focused. We are looking at very focused and driven workflow.”

To improve diagnosis capabilities across several modalities, Cedara is currently working on extended capabilities in multimodality registration. “Based on our experience with PET/CT imaging, healthcare professionals are finding increased value in combined imaging such as MR/CT and SPECT/CT as a means to concurrently display both anatomy and physiology,” mentioned Clark. “In similar fashion, CT/CT image fusion can provide relevant diagnostic information in terms of changes to anatomy over time.”

Slomka anticipates that the most important applications for fusion imaging will evolve in radiation therapy for oncology, and as such, he and other researchers at Cedars-Sinai are currently developing software to automatically improve disease detection from PET or SPECT/CT.

With medicine in the twenty-first century focusing on preventative measures, it is not too far off the radar to consider fused PET and SPECT imaging being used as a screening tool, much like mammography. “You would have to have highly specific tests. Screening with technology like PET can create more problems, but with fusion you could improve the specificity. It would have to be much more fool-proof,” said Slomka, “more accurate not on detection but on the false-positive aspect to use it as a screening technology.”

Before physicians take the next big leap toward the future, technology must take a few smaller steps, such as improved registration, precise 2-D rendering, optimized 3-D image fusion and cross-specialty connecting, for fusion imaging to truly become a multimodality application.

July 29, 2025
